Optimize

Optimize models for the highest performance with sparsity regularization and quantization aware training. Fine-tune existing models or train from scratch.
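As a rough illustration of the two techniques named above, the sketch below shows one common way each is expressed in code. It is not Femtosense's SDK or training recipe. The helper names `soft_threshold` and `fake_quantize`, the penalty strength `lam`, and the `scale` and `bits` values are all hypothetical, chosen only for the example. Soft-thresholding is the standard proximal step for an L1 sparsity penalty, and "fake quantization" is the usual trick of rounding weights to integer levels during training while keeping them in floating point.

```python
def soft_threshold(w, lam):
    """Proximal step for an L1 penalty: shrink w toward zero by lam,
    and set it to exactly 0.0 when its magnitude is at most lam."""
    if w > lam:
        return w - lam
    if w < -lam:
        return w + lam
    return 0.0


def fake_quantize(w, scale, bits=8):
    """Snap w to the nearest signed integer level of width `bits`,
    then map it back to a float (illustrative, per-tensor scale)."""
    qmax = 2 ** (bits - 1) - 1
    qmin = -(2 ** (bits - 1))
    q = max(qmin, min(qmax, round(w / scale)))
    return q * scale


# Hypothetical weights: two large, two near zero.
weights = [0.8, -0.03, 0.02, -1.2]

# Sparsity regularization pushes the small weights to exactly zero.
pruned = [soft_threshold(w, lam=0.05) for w in weights]

# Quantization-aware training would see these rounded values in the forward pass.
quantized = [fake_quantize(w, scale=0.25, bits=4) for w in pruned]
```

In this toy case the two small weights become exactly zero, so a sparse format would not need to store them, and the remaining weights land on 4-bit levels.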

Supports sparsely connected models

Only stores and computes on weights that matter

10x improvement in speed, efficiency, and memory

Supports sparse activations

Skips computation when a neuron outputs zero

10x increase in speed and efficiency

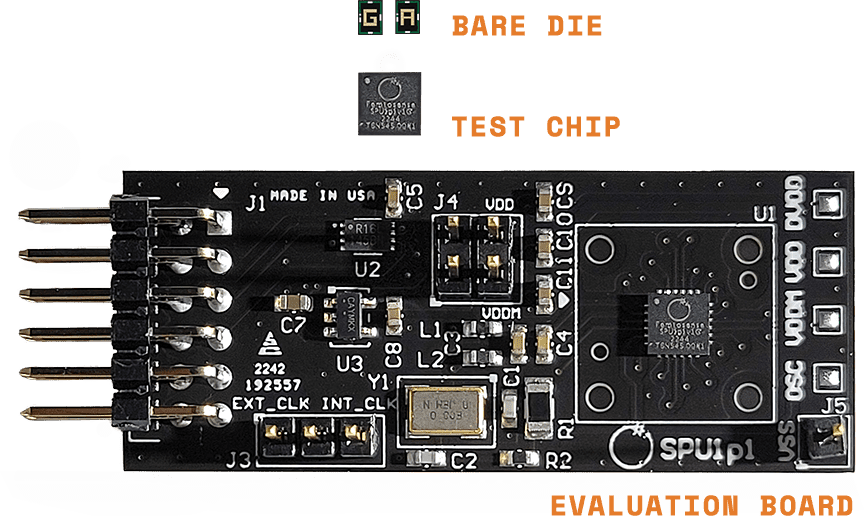

Femtosense’s dual sparsity design consumes much less energy than existing approaches by computing on only the fraction of neurons that are active.
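To make the idea of dual sparsity concrete, here is a minimal software sketch of a matrix-vector product that skips both zero weights and zero activations, and counts the multiply-accumulate operations (MACs) it performs. It models the concept only and does not describe how the SPU hardware is implemented. The function names and the toy `weights` and `x` values are assumptions made for this example.

```python
def dense_matvec(weights, x):
    """Baseline: multiply every weight by every input, zero or not."""
    macs = 0
    out = []
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            acc += w * xi
            macs += 1
        out.append(acc)
    return out, macs


def to_sparse_columns(weights):
    """Weight sparsity: store only nonzero weights, grouped by input
    column, as {column: [(row, weight), ...]}."""
    cols = {}
    for r, row in enumerate(weights):
        for c, w in enumerate(row):
            if w != 0.0:
                cols.setdefault(c, []).append((r, w))
    return cols


def dual_sparse_matvec(sparse_cols, x, n_rows):
    """Skip zero activations entirely, and touch only stored weights."""
    out = [0.0] * n_rows
    macs = 0
    for c, xi in enumerate(x):
        if xi == 0.0:
            continue  # activation sparsity: this input contributes nothing
        for r, w in sparse_cols.get(c, ()):
            out[r] += w * xi
            macs += 1
    return out, macs


# Toy layer: 3 outputs, 4 inputs, mostly zero weights and inputs.
weights = [
    [0.5, 0.0, 0.0, -1.0],
    [0.0, 0.0, 2.0, 0.0],
    [0.0, 1.5, 0.0, 0.0],
]
x = [1.0, 0.0, 3.0, 0.0]

dense_out, dense_macs = dense_matvec(weights, x)
sparse_out, sparse_macs = dual_sparse_matvec(
    to_sparse_columns(weights), x, len(weights)
)
print(dense_out == sparse_out, dense_macs, sparse_macs)
```

Both versions produce the same output, but the dense loop performs 12 MACs while the dual-sparse loop performs 2. The saving in any real model depends on how sparse its weights and activations actually are.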

Our SDK supports PyTorch, TensorFlow, and JAX, so developers can get started with minimal barriers. The SDK provides advanced sparse optimization tools, a model performance simulator, and the Femto compiler.

Start with existing models: Deploy TensorFlow, PyTorch, and JAX models to the SPU without fine-tuning. Achieve high efficiency for dense models, and even higher efficiency for sparse models.
